Achieving expert-like robotic task execution in dynamic environments typically requires extensive, high-quality expert demonstrations, a significant bottleneck for real-world deployment. We present a novel learning framework that overcomes this data dependency, enabling robots to perform complex periodic tasks with expert-like proficiency, even when learning from naive demonstrations.

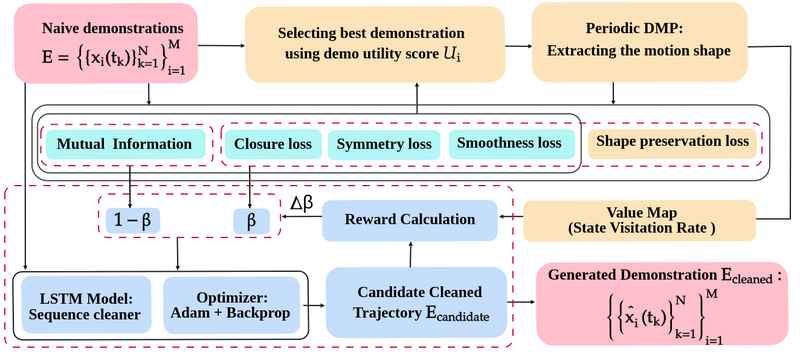

Our two-stage pipeline first selects a representative demonstration based on user-defined, information-aware task-intention scores. This single best demonstration is then used to extract a canonical motion shape via Periodic Dynamic Movement Primitives (DMPs). Finally, a Long Short-Term Memory (LSTM) network refines the entire set of demonstrations, leveraging a multi-objective score that combines the canonical shape with mutual information and other task quality metrics.
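As a rough illustration of how a periodic primitive can encode a canonical shape, the sketch below fits the weights of a periodic forcing term with von Mises basis functions and locally weighted regression, which is the standard construction for periodic DMPs. It is not the authors' implementation: the function names, the number of basis functions, and the width heuristic are assumptions, and the full DMP transformation system (spring-damper dynamics, amplitude and frequency adaptation) is omitted.

```python
import math

def von_mises_basis(phase, centers, width):
    """Periodic activations psi_i = exp(width * (cos(phase - c_i) - 1))."""
    return [math.exp(width * (math.cos(phase - c) - 1.0)) for c in centers]

def fit_periodic_weights(phases, targets, n_basis=20, width=None):
    """Fit one weight per basis by locally weighted regression.

    Assumes unit amplitude, so each weight is the activation-weighted
    mean of the target signal around its basis center.
    """
    if width is None:
        width = 2.5 * n_basis  # hypothetical heuristic, not from the paper
    centers = [2.0 * math.pi * i / n_basis for i in range(n_basis)]
    num = [0.0] * n_basis
    den = [0.0] * n_basis
    for phase, target in zip(phases, targets):
        for i, psi in enumerate(von_mises_basis(phase, centers, width)):
            num[i] += psi * target
            den[i] += psi
    weights = [n / (d + 1e-12) for n, d in zip(num, den)]
    return centers, weights, width

def evaluate_shape(phase, centers, weights, width):
    """Normalized weighted sum of basis functions at the given phase."""
    psi = von_mises_basis(phase, centers, width)
    return sum(p * w for p, w in zip(psi, weights)) / (sum(psi) + 1e-12)
```

Because every basis function depends on the phase only through a cosine, the fitted shape repeats exactly every 2π, which is what makes this representation suitable for cyclic motions such as wiping.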

The proposed approach is demonstrated on a Franka Research 3 robot performing phasic tasks across three contrasting domains: wiping in human assistive services, weaving in the textile industry, and pick-and-place operations for warehouse automation. Code is available at https://github.com/FocasLab/ICRA-IL-2026.

We employ a multi-objective scoring mechanism derived from task-intentional prompts (the dos and don'ts of the task). The Demo Utility Score U(x_i) quantifies the quality of each raw demonstration x_i based on the mutual information between states and actions, as well as kinematic and geometric characteristics, including symmetry, closure, and smoothness losses.
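To make the geometric terms concrete, here is a minimal sketch of a utility score built from closure, smoothness, and symmetry penalties. The specific loss definitions, the equal default weights, and the function names are assumptions chosen for illustration; the mutual-information term is left out, and the exact form of U(x_i) used in the paper may differ. A trajectory is assumed to be a list of points covering one cycle, with the last sample returning to the start.

```python
import math

def closure_loss(traj):
    """Distance between first and last point; zero for a closed cycle."""
    return math.dist(traj[0], traj[-1])

def smoothness_loss(traj):
    """Mean squared second finite difference (discrete acceleration proxy)."""
    total = 0.0
    for a, b, c in zip(traj, traj[1:], traj[2:]):
        total += sum((pa - 2.0 * pb + pc) ** 2 for pa, pb, pc in zip(a, b, c))
    return total / max(len(traj) - 2, 1)

def symmetry_loss(traj):
    """Mean squared deviation from point symmetry about the centroid.

    Assumed convention: samples half a cycle apart should mirror each
    other through the centroid; the repeated end point is dropped first.
    """
    pts = traj[:-1]
    n = len(pts)
    half = n // 2
    dim = len(pts[0])
    centroid = [sum(p[d] for p in pts) / n for d in range(dim)]
    total = 0.0
    for i in range(half):
        a, b = pts[i], pts[i + half]
        total += sum((a[d] + b[d] - 2.0 * centroid[d]) ** 2 for d in range(dim))
    return total / max(half, 1)

def demo_utility(traj, w_closure=1.0, w_smooth=1.0, w_symmetry=1.0):
    """Higher is better: negated weighted sum of the three penalties."""
    penalty = (w_closure * closure_loss(traj)
               + w_smooth * smoothness_loss(traj)
               + w_symmetry * symmetry_loss(traj))
    return -penalty
```

With such a score, picking the representative demonstration reduces to `max(demos, key=demo_utility)`, where `demos` is a hypothetical list of recorded trajectories.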

The expert-like trajectory is selected using the demo utility score, and Periodic Dynamic Movement Primitives (DMPs) generate a canonical reference for the desired motion. Rollouts from the learned Periodic DMP estimate a state visitation value map over the task space using kernel density estimation (KDE).
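The value-map step can be pictured with a small Gaussian KDE over 2D rollout points. This is only a sketch under stated assumptions: the isotropic kernel, the fixed bandwidth, the grid evaluation, and the peak normalization are illustrative choices, and the function names are hypothetical rather than taken from the released code.

```python
import math

def gaussian_kde_2d(points, bandwidth):
    """Return a function estimating visitation density at (x, y)."""
    norm = 1.0 / (len(points) * 2.0 * math.pi * bandwidth ** 2)

    def density(x, y):
        total = 0.0
        for px, py in points:
            sq_dist = (x - px) ** 2 + (y - py) ** 2
            total += math.exp(-0.5 * sq_dist / bandwidth ** 2)
        return norm * total

    return density

def value_map(points, bandwidth, xs, ys):
    """Evaluate the density on a grid and scale it so the peak equals 1.

    Assumes at least one grid cell lies close enough to the rollouts
    for the peak density to be non-zero.
    """
    density = gaussian_kde_2d(points, bandwidth)
    grid = [[density(x, y) for x in xs] for y in ys]
    peak = max(max(row) for row in grid)
    return [[v / peak for v in row] for row in grid]
```

States close to the canonical rollouts receive values near 1, while states far from them receive values near 0, so the map can serve as a soft indicator of how closely a demonstration follows the reference motion.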

A Long Short-Term Memory (LSTM) network refines the entire set of demonstrations by learning trajectory corrections. The LSTM leverages the multi-objective score combining the canonical shape with mutual information and task quality metrics to produce expert-like trajectories from imperfect demonstrations.

Our approach achieves expert-like performance on complex periodic tasks, even when trained on as few as four demonstrations.

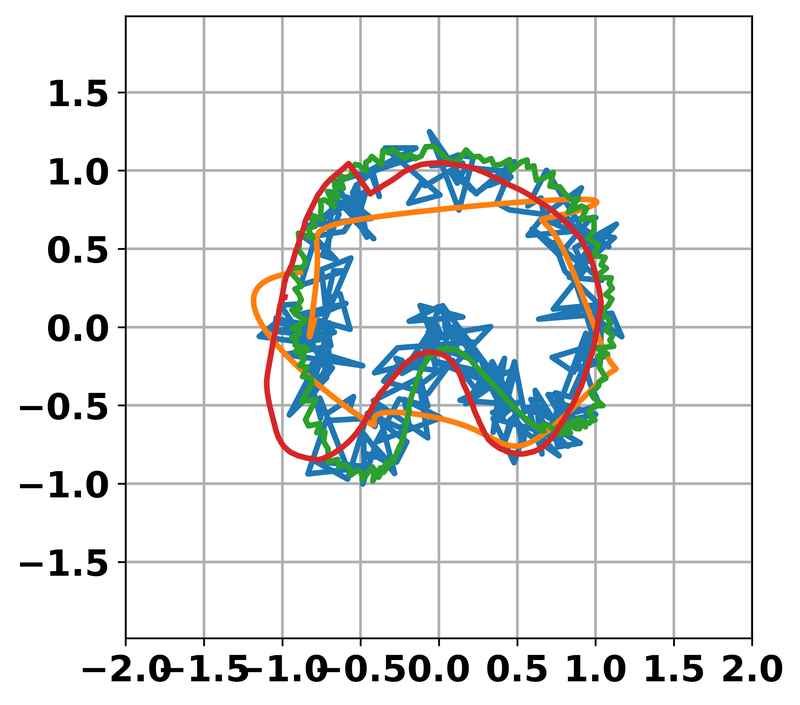

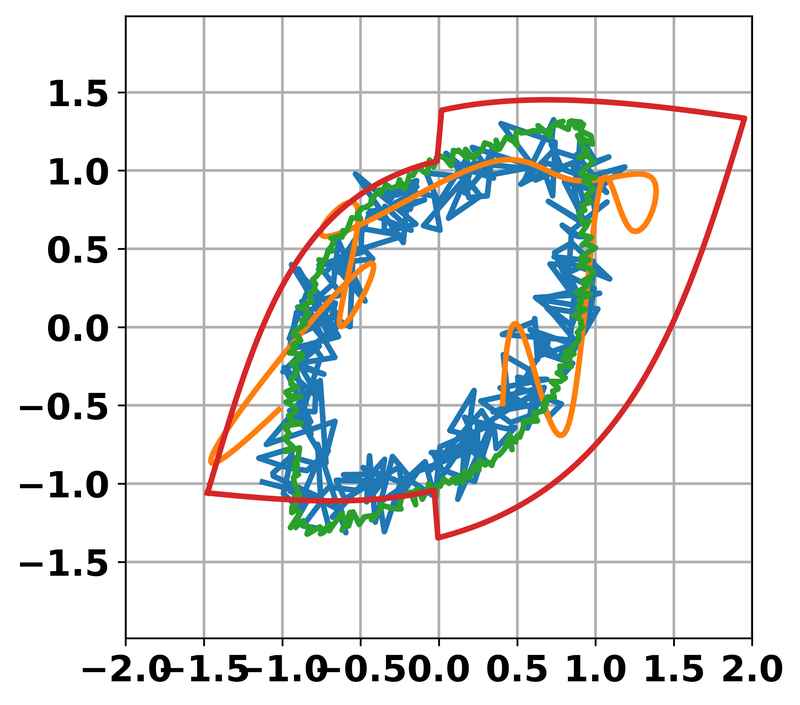

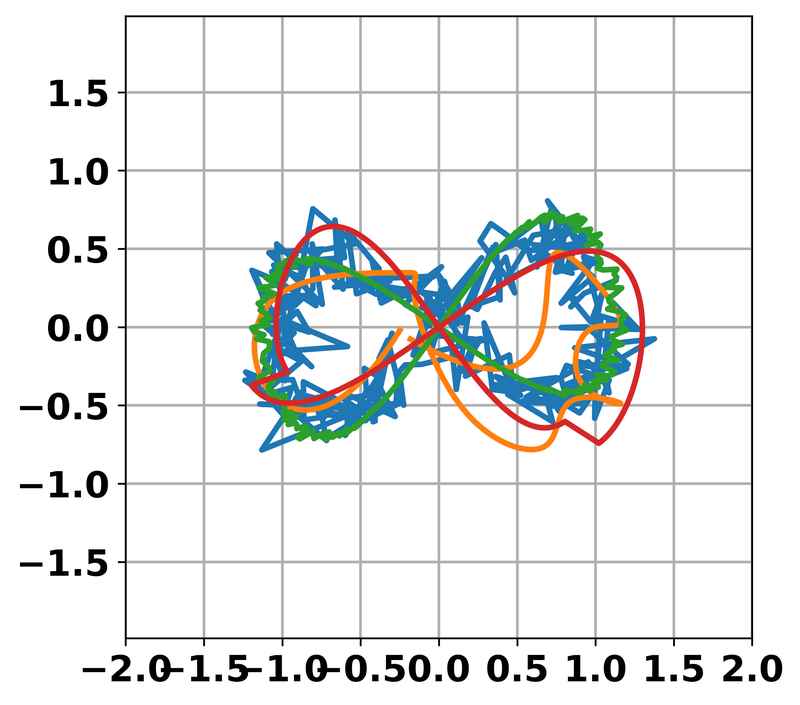

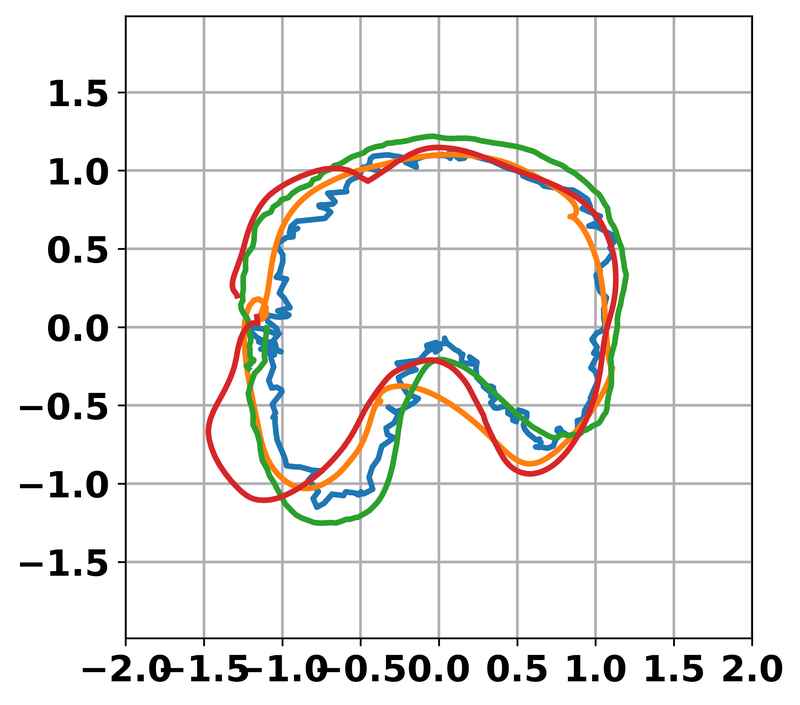

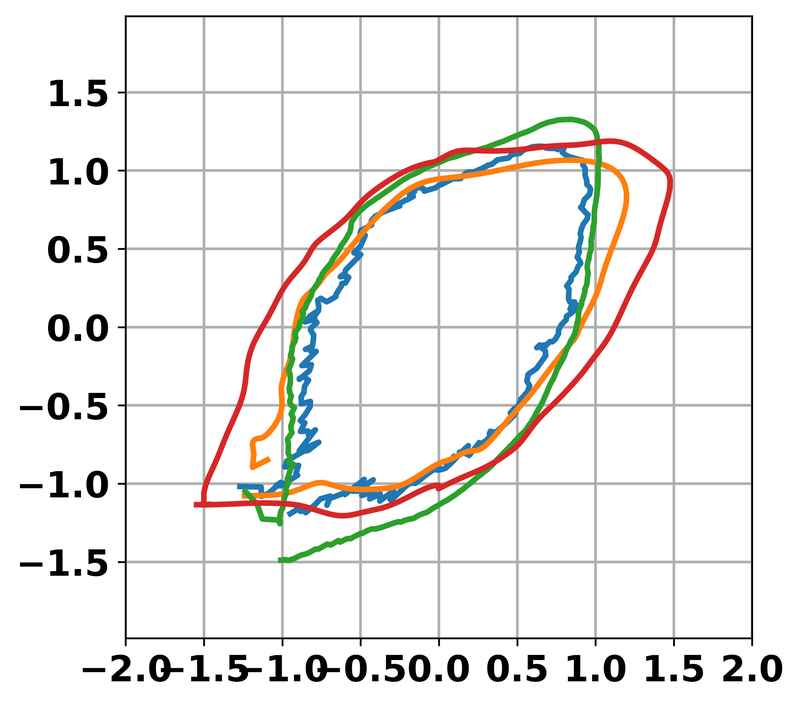

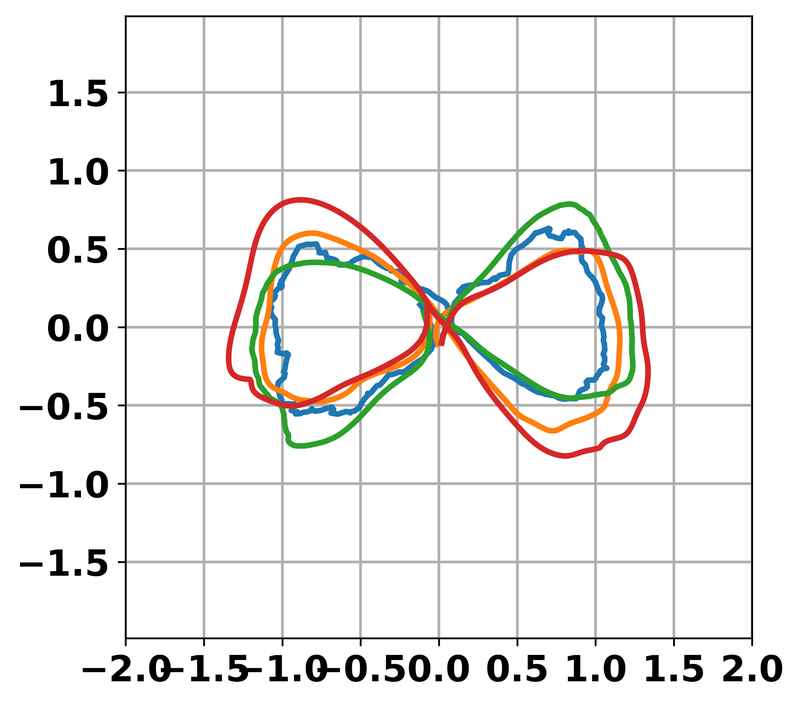

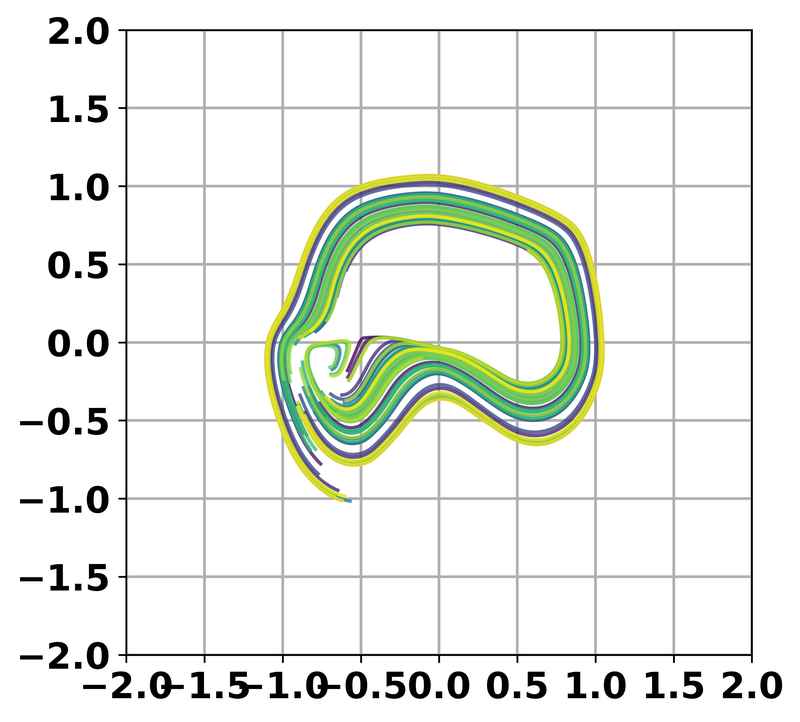

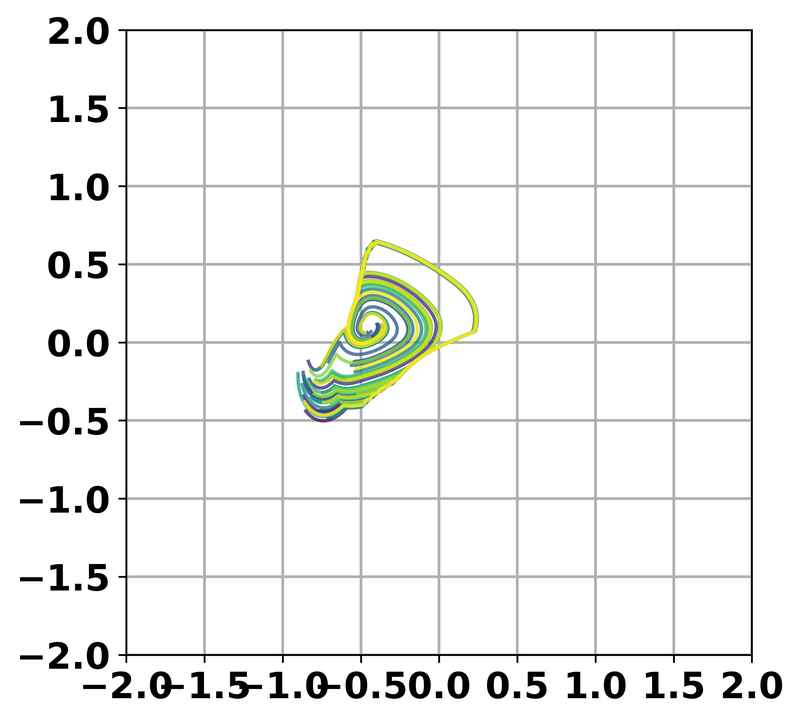

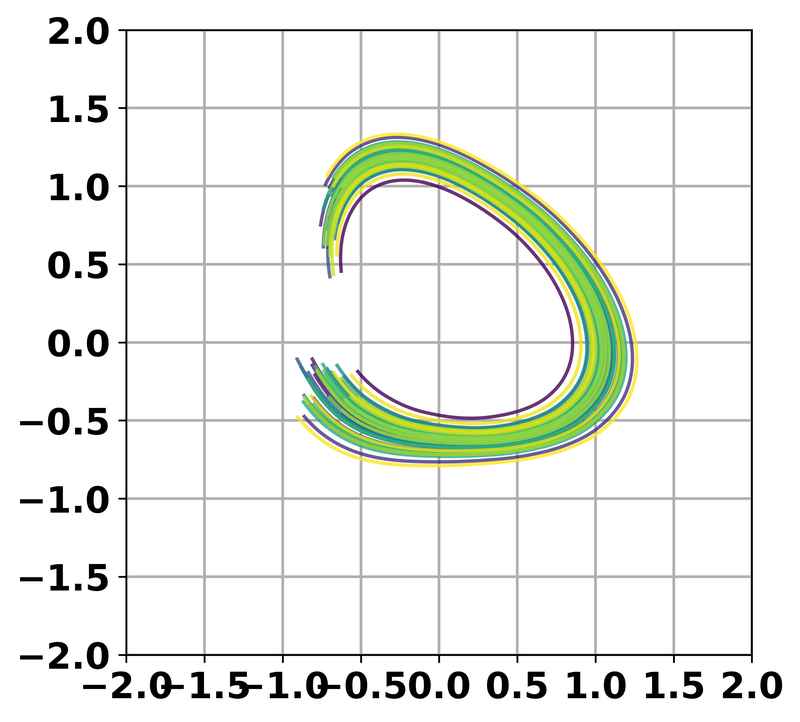

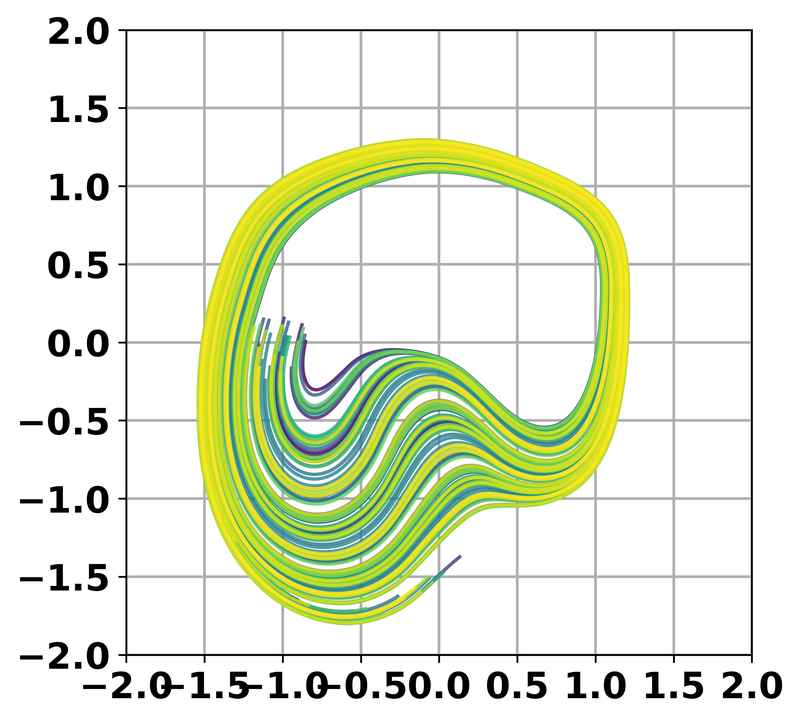

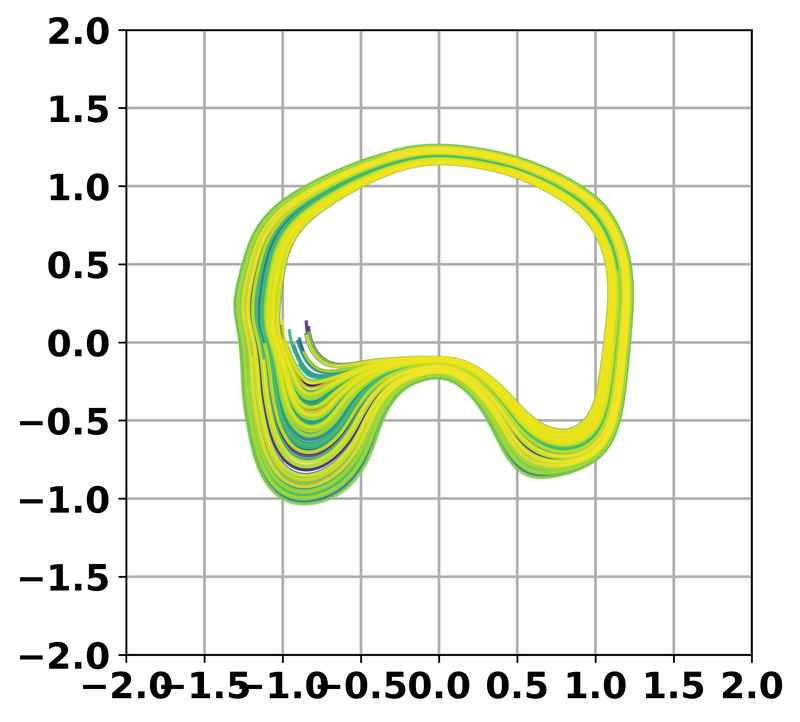

Comparison of noisy raw demonstrations versus cleaned demonstrations produced by our two-stage pipeline across three periodic task domains. Trajectory projection visualized in the XY plane.

Panels: Wiping Task (WP), Pick-and-Place Task (PnP), Weaving Task (WV).

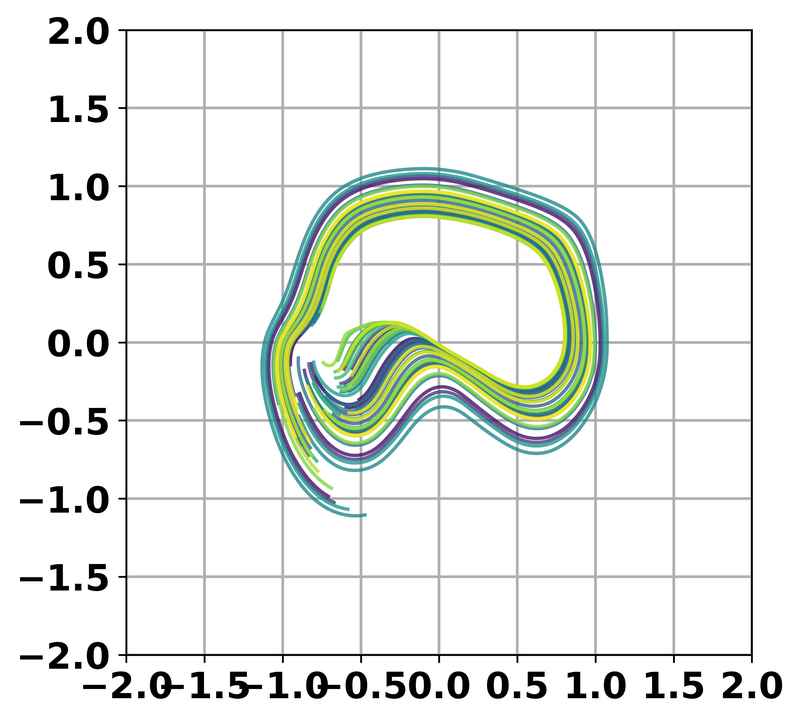

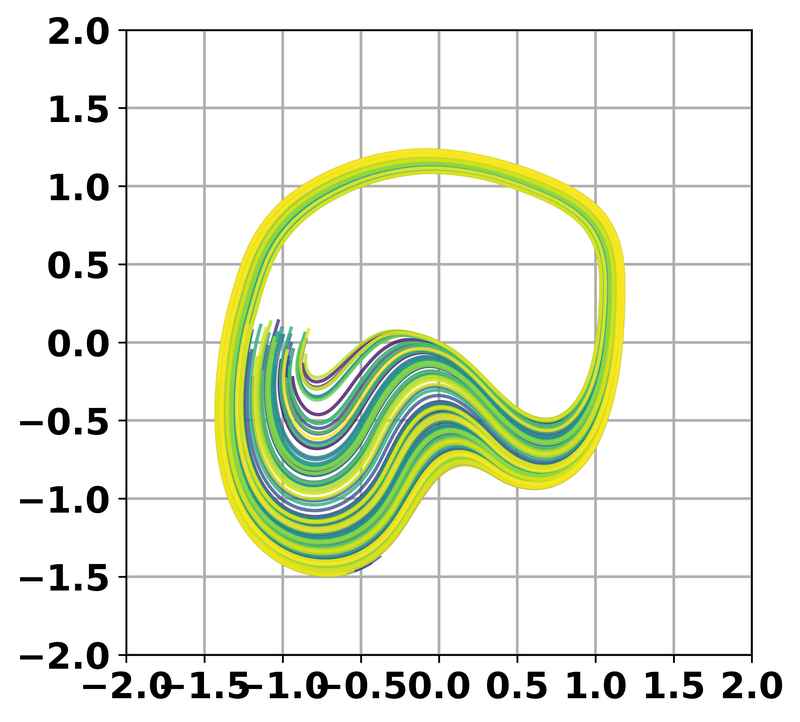

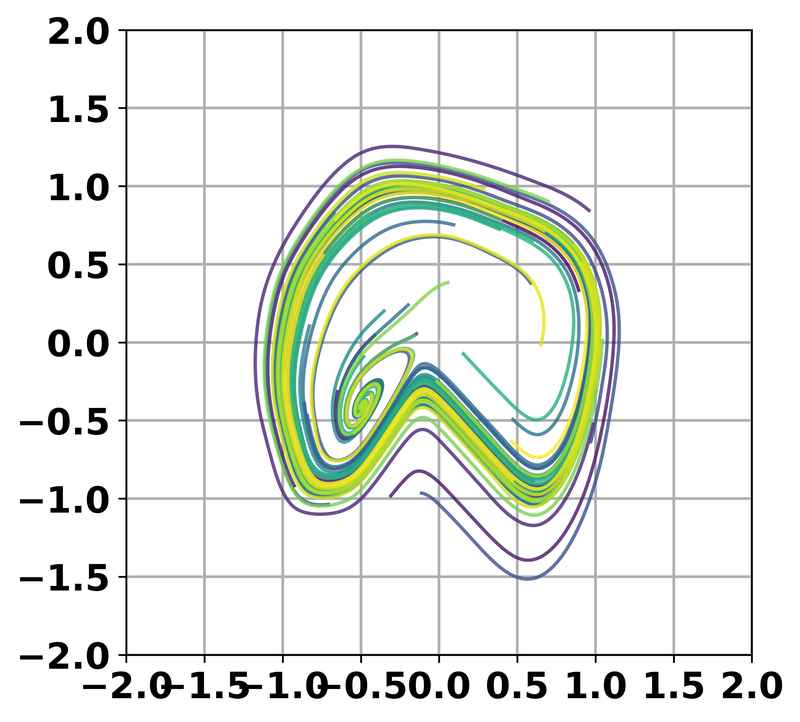

Comparison of rollout trajectories from four imitation learning models trained on noisy and cleaned demonstration data.

Panels: BC, Traj-BC, ILEED, Neural-ODE.

@inproceedings{saharshsonkar2026beyond,
  author = {S. Saharsh and Shubham Sonkar and Pushpak Jagtap and Ravi Prakash},
  title = {Beyond the Teacher: Leveraging Mixed-Skill Demonstrations for Robust Imitation Learning},
  booktitle = {IEEE International Conference on Robotics and Automation (ICRA)},
  year = {2026},
}